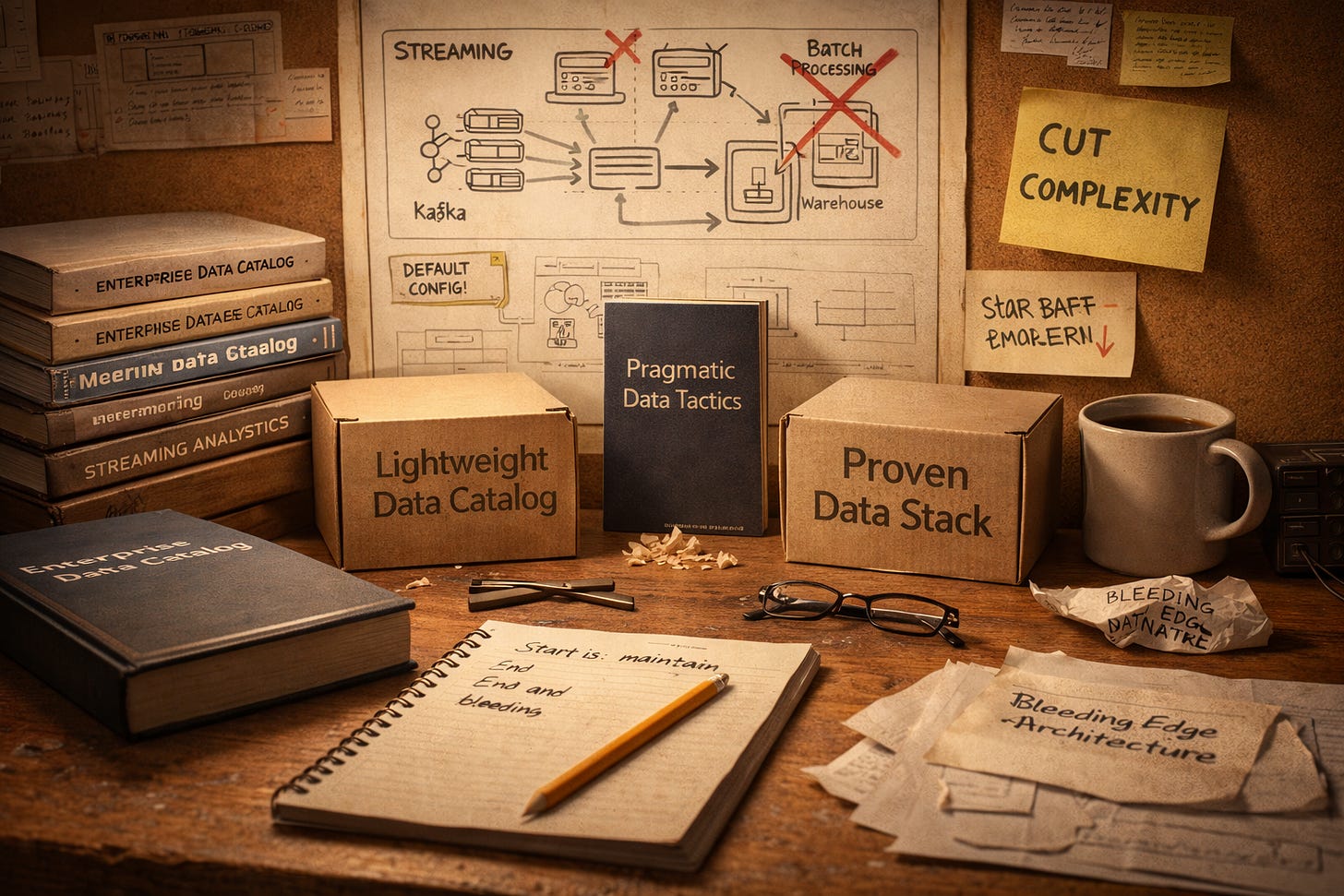

The Pragmatist’s Playbook

The Data Report - Week ending January 11, 2026

The data community spent this week asking uncomfortable questions. Why do data catalogs keep failing? When does real-time actually matter? What’s the minimum viable stack for a team of three?

The answers shared a theme: complexity isn’t delivering. Teams are pushing back on the default assumptions that have guided data infrastructure decisions for years. Enterprise catalogs with thousand-feature checklists are losing ground to tools you can deploy in an afternoon. Streaming pipelines are getting scrutinized for their cost-per-insight. And small teams are building on proven components rather than chasing the next platform shift.

This week we cover four stories of pragmatism winning over ambition: the catalog adoption problem, the return of design-first thinking, the freshness question, and the rise of the SMB data stack.

The Catalog Paradox

Simpler choices, better outcomes: this week’s discussions suggest the data catalog problem isn’t technical.

Data catalogs have been promising to solve the “source of truth” problem for over a decade. The pitch is compelling: centralize metadata, enable discovery, enforce governance. Yet adoption remains stubbornly low. Industry research shows only about 16% of organizations qualify as truly data-driven, and over 70% of data initiatives never make it past the pilot stage. Why?

This week’s Reddit discussion on catalog adoption surfaced the usual suspects: maintenance burden, cost, limited UX for business users, and simple tool fatigue. One commenter described building a lightweight tool to auto-ingest metadata from databases and BI tools, then realizing they’d essentially recreated a catalog. The pattern is familiar: teams want catalog benefits without catalog overhead.

Enter tools like Marmot, which proposes a catalog without the complex infrastructure. The thesis: if deployment takes an afternoon instead of a quarter, adoption follows. It’s a bet that the problem was never features, but friction.

The row-level lineage discussion added another dimension. Traditional catalogs track lineage at the table and column level, but teams whose data passes through 10 to 20 processing steps need to trace individual records. The two options raised, blockchain-style logs and compact bitmasks, each carry trade-offs. It’s a reminder that governance needs aren’t static; they evolve with pipeline complexity.
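To make the bitmask option concrete, here is a minimal sketch in Python. It is not taken from the thread: the step names, the `Record` class, and the helper functions are all hypothetical. Each pipeline step gets one bit, and every record carries an integer recording which steps touched it.

```python
from dataclasses import dataclass

# Hypothetical pipeline steps; each one is assigned a single bit position.
STEPS = ["ingest", "dedupe", "normalize", "enrich", "aggregate"]
STEP_BIT = {name: 1 << i for i, name in enumerate(STEPS)}


@dataclass
class Record:
    payload: dict
    lineage: int = 0  # bitmask of the steps that have processed this record


def mark(record: Record, step: str) -> Record:
    """Set the bit for `step` on the record's lineage mask."""
    record.lineage |= STEP_BIT[step]
    return record


def steps_applied(record: Record) -> list[str]:
    """Decode the mask back into step names, in declaration order."""
    return [name for name in STEPS if record.lineage & STEP_BIT[name]]
```

The trade-off shows up right away: one integer per record is cheap to store and query, but it only answers “which steps touched this row?” It says nothing about the order of the steps or what each step changed, which is the kind of detail a fuller log would have to carry.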

The pragmatist’s takeaway: catalog failure isn’t about picking the wrong vendor. It’s about mismatched complexity. Start with what you can maintain.

Design-First Returns

When code writes itself, design becomes the bottleneck.

For years, the data community favored code-first development. Write the SQL, infer the docs, let lineage tools figure out the relationships. It worked when humans were the bottleneck. But with AI generating code faster than teams can review it, the calculus has changed.

This week’s discussion on design-first approaches argues for a return to upfront modeling: define data contracts, establish lineage, and document semantics before writing transformation code. The reasoning is practical: AI-generated code creates governance bottlenecks. If you don’t know what a field means before the model runs, you won’t know afterward either.

The concept isn’t new. Industry voices have been pushing semantic layers and data contracts for years. Recent developments like the Open Semantic Interchange initiative, with Snowflake, Salesforce, and dbt Labs collaborating on “semantic glue,” suggest the infrastructure is maturing. A data model is a semantic agreement, defining what entities exist, how they relate, and what rules govern integrity. Without that agreement, you’re debugging meaning alongside code.

One weekend project shared on Reddit demonstrated the design-first principle applied to AI chatbots. Instead of letting LLMs write SQL, the author exposed prewritten, vetted queries as tools via MCP, with user-provided filter parameters. Business rules stay encoded in the queries, not hallucinated by the model. It’s a small example of a larger pattern: constrain the AI with design, not prompts.
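The shape of that idea can be sketched without any MCP wiring. The example below is an illustration under assumptions, not the author’s code: the `orders` table, the `orders_by_status` tool name, and the `run_tool` helper are all hypothetical, and SQLite stands in for whatever database the project used. The point is that the model only chooses a tool name and supplies filter values; the SQL itself is fixed.

```python
import sqlite3

# Hypothetical registry of vetted queries. The SQL is written and reviewed
# by a human; callers may only supply the named filter parameters.
VETTED_QUERIES = {
    "orders_by_status": {
        "sql": (
            "SELECT id, total FROM orders "
            "WHERE status = :status AND total >= :min_total "
            "ORDER BY id"
        ),
        "params": {"status": str, "min_total": float},
    },
}


def run_tool(conn: sqlite3.Connection, name: str, **filters):
    """Run a vetted query by name with validated, bound parameters."""
    spec = VETTED_QUERIES.get(name)
    if spec is None:
        raise KeyError(f"unknown tool: {name}")
    expected = spec["params"]
    if set(filters) != set(expected):
        raise ValueError(f"expected parameters {sorted(expected)}")
    # Coerce each value to its declared type, then bind it as a named
    # parameter so user input never becomes part of the SQL text.
    bound = {key: expected[key](value) for key, value in filters.items()}
    return conn.execute(spec["sql"], bound).fetchall()
```

In a real deployment each registry entry would be exposed as a tool to the chatbot; here a direct call such as `run_tool(conn, "orders_by_status", status="paid", min_total=50)` plays that role.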

The Agentic Patterns repository that surfaced this week reinforces the point. Its catalog of production-tested agent patterns includes an entire section on governance and safety: human-in-the-loop approvals, chain-of-thought monitoring, egress lockdown. These aren’t afterthoughts. They’re design decisions that shape how agents operate.

The Freshness Question

Real-time is expensive. The question is whether it’s worth it.

“What’s the purpose of live data?” asked a Reddit thread this week. The community’s answer was nuanced: tie data freshness to decision latency. If a recommendation must adapt within seconds, stream. If a board report needs to reconcile perfectly every morning, batch. The 2025 Data Streaming Report has 86% of IT leaders citing streaming investments as a priority, yet Gartner research suggests batch processing remains dominant for many use cases.

The cost difference is real. Streaming systems require always-on infrastructure, meaning 24/7 compute bills. Batch systems run in predictable bursts, easier to budget and scale. Uber’s transition from batch to Flink-based streaming cut data freshness from hours to minutes, but Uber operates at a scale where minute-level freshness directly accelerates model launches and experimentation velocity. Most teams don’t.
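A back-of-the-envelope comparison shows why the billing model matters. The sketch below is illustrative only: the function names are hypothetical, a month is assumed to have 30 days, and any hourly rate you plug in is your own estimate rather than a vendor price.

```python
HOURS_PER_DAY = 24
DAYS_PER_MONTH = 30  # simplifying assumption


def always_on_monthly(hourly_rate: float) -> float:
    """Compute cost for infrastructure that runs around the clock."""
    return hourly_rate * HOURS_PER_DAY * DAYS_PER_MONTH


def batch_monthly(hourly_rate: float, run_hours: float, runs_per_day: int) -> float:
    """Compute cost when capacity is only billed while a job runs."""
    return hourly_rate * run_hours * runs_per_day * DAYS_PER_MONTH
```

With the same hypothetical rate, a one-hour job run four times a day is billed for 4 of every 24 hours, so it costs one sixth of the always-on figure. Real bills also include storage, networking, and operations time, which this sketch ignores.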

Another thread asking about real-time ingestion from multiple sources explicitly excluded off-the-shelf connectors. The implication: teams want streaming capabilities without the platform lock-in that typically comes with them. It’s a common tension.

The “Hidden Cost Crisis in Data Engineering” discussion connected the dots. Tool sprawl, brittle pipelines, and cloud waste are driving up costs. Real-time isn’t exempt. Every streaming pipeline that can’t justify its latency requirements is a cost center.

The pragmatist’s framework: start with the decision, not the technology. What’s the tolerable latency? What’s the reliability target? If the answer is “hours” and “eventually consistent,” batch wins.
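That framework can be written down as a tiny decision rule. This is a deliberately simplified sketch: the `choose_mode` function, the 60-second default threshold, and the choice to let exact reconciliation override latency are all assumptions made for illustration, not guidance from the threads.

```python
def choose_mode(
    tolerable_latency_s: float,
    needs_exact_reconciliation: bool,
    stream_threshold_s: float = 60.0,  # assumed cutoff; tune per team
) -> str:
    """Pick 'stream' or 'batch' starting from the decision, not the tooling."""
    # Reports that must reconcile exactly are treated as batch workloads,
    # regardless of latency (a simplifying assumption).
    if needs_exact_reconciliation:
        return "batch"
    # Only decisions that must react within the threshold justify streaming.
    if tolerable_latency_s <= stream_threshold_s:
        return "stream"
    return "batch"
```

A recommendation that must adapt within five seconds lands on `"stream"`, while a dashboard that tolerates six hours of staleness lands on `"batch"`. The value of writing the rule down is that it forces the latency and reliability questions to be answered with numbers before any platform is chosen.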

The SMB Stack

Small teams are building data infrastructure. The playbook is simpler than you’d think.

The modern data stack promised democratization: warehouse, pipeline, transformation, visualization, accessible to any team with a credit card. For enterprises, this meant architectural debates and vendor evaluations. For SMBs, it meant a different question: what’s the minimum I can build and still get value?

This week’s BigQuery/Airbyte/Looker strategy post walked through the calculus. Sources: Shopify Plus, GA, Xero, SKIO. Warehouse: BigQuery. ETL: Airbyte, with a path to self-hosting later. BI: Looker for joining spreadsheets with warehouse data. The approach: limit data scope (150k orders/year, skip line items) to keep BigQuery cheap. The concern: cloud lock-in and surprise cost spikes.

A thread on BI tools for non-technical teams asked for 2026 recommendations: drag-and-drop dashboards, minimal SQL, native connectors to CRM and accounting. The requirements signal where SMB data maturity has landed. Teams aren’t asking whether to build analytics. They’re asking which tool lets business users self-serve without hiring a data engineer.

In the “Building a Data Warehouse from Scratch” thread, a newcomer proposed a full lakehouse architecture: a Bronze layer of raw data in S3, a Silver layer of Iceberg tables built via dbt and Glue, a Gold layer of BI views, Trino for queries, and Airflow for orchestration. The community’s response was measured: maybe simpler is better for a team of one.

The pattern across these discussions: enterprise-grade tools are accessible, but enterprise-grade complexity isn’t necessary. The hidden cost of the SMB tech stack isn’t the tools; it’s piecing together too many of them. Start with what you can maintain, add when you hit limits.

The Thread

The thread running through this week’s discussions: the data community is getting practical. Not cynical, not conservative, but clear-eyed about what complexity costs and what simplicity enables.

Data catalogs aren’t failing because vendors build bad software. They’re failing because teams can’t absorb the overhead. Real-time isn’t overrated. It’s just not free, and the ROI depends on how fast you actually need to act. SMBs aren’t building toy stacks. They’re building proportionate ones.

The pragmatist’s playbook isn’t about doing less. It’s about matching solutions to problems. Start with what you can maintain. Add when you hit real limits. Skip the features you’ll never use.