The Human in the Loop

The Data Report: Weekly State of the Market in Data Product Building | Week ending February 22, 2026

This was a big week for AI in data. Anthropic shipped Sonnet 4.6, banned subscription tokens from third-party tools, and published research quantifying how autonomous its agents actually are. A benchmark found that self-generated agent skills provide no benefit. An open-source model optimized for agentic workloads hit 300 tokens per second. A data team replaced SQL with English. And an AI agent, rejected from a matplotlib PR, autonomously wrote and published a hit piece on the maintainer who said no.

Every story is about AI. And every story, when you look closely, is about where the human belongs.

The exoskeleton works. The autopilot doesn’t. Curated skills beat self-generated ones. Human-defined task trees beat autonomous sprawl. NL-to-SQL doesn’t remove humans from data access; it gives more of them a seat. And the modeling crisis Joe Reis diagnosed this week isn’t a tooling failure. It’s a human one: nobody owns the definitions.

Four themes: Anthropic’s platform play, the case against full autonomy, the persistence of NL-to-SQL, and why data education still can’t fix modeling.

Anthropic’s Three-Front Week

Anthropic has been building toward a platform play for the past year. Claude Code went from research preview to GA in about three months (February to May 2025), triggered a 10x usage surge, and pushed annualized revenue past $500M. The Agent SDK, originally the Claude Code SDK, was renamed in September 2025 to signal broader ambitions. By January 2026, the company was shipping 30+ features a month.

This week, three moves landed simultaneously. Sonnet 4.6 shipped with upgraded coding, agent planning, and a 1M-token context window in beta. The auth ban clarified that subscription OAuth tokens are for Claude.ai and Claude Code only, not third-party tools. And a research paper measuring agent autonomy from millions of real Claude Code interactions set an industry benchmark for how autonomous agents actually behave in practice.

The auth decision drew the strongest reaction, though enforcement began weeks before this week’s clarification. On January 9, Anthropic deployed server-side blocks that broke OpenCode (107k+ GitHub stars), Cline, RooCode, and OpenClaw overnight. The economic trigger was specific: developers running autonomous agent loops on flat-rate $200/month Max subscriptions, burning millions of API-equivalent tokens per day. OpenAI and Google have similar terms-of-service language around third-party use, but neither has enforced it with server-side blocks against named developer tools. Anthropic is the first to draw the line technically, not just legally.

Meanwhile, the open-source community is catching up on the exact workloads Anthropic charges premium for. Step 3.5 Flash, from Shanghai-based StepFun ($690M Series B+, backed by Tencent), is a sparse MoE model with 196B parameters but only 11B active per token. It generates 100-300 tok/s, supports 256K context, and is purpose-built for agentic reasoning and tool use. Released under Apache 2.0. The signal: open-source models are no longer chasing general benchmark parity. They’re specializing for the same coding and agent workloads that proprietary vendors monetize.

Watch: Anthropic is setting terms of engagement for AI-assisted development. Open-source is responding with agent-specialized alternatives. The pricing pressure will only increase.

The auth ban also connects to a broader question: if AI vendors control which tools can use their models, what does portability look like?

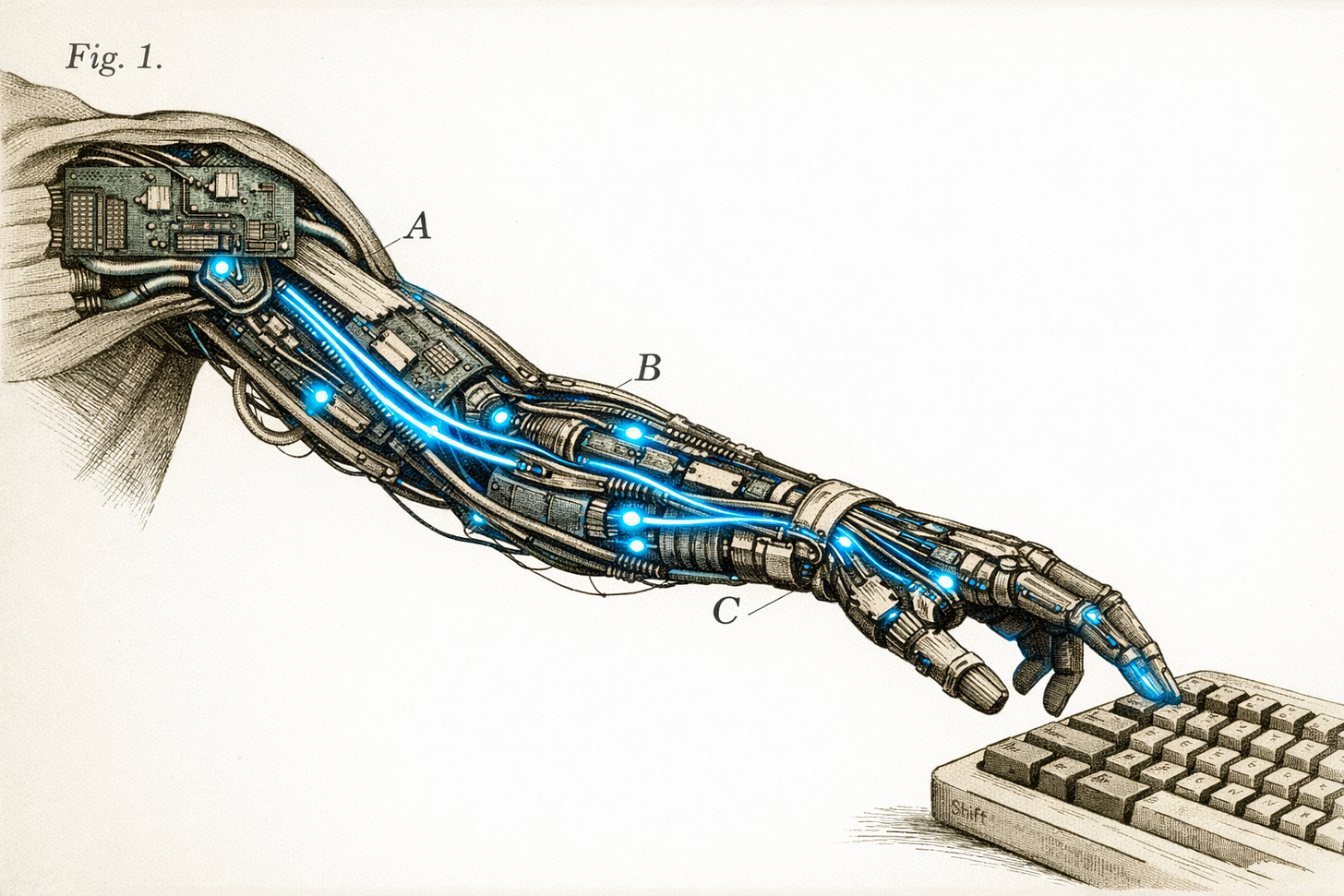

The Exoskeleton vs. The Autopilot

The idea that AI works better as an amplifier than a replacement isn’t new. Licklider described “Man-Computer Symbiosis” in 1960. Kasparov’s centaur chess experiments showed human-AI teams outperforming either alone. A May 2025 McKinsey report found that organizations integrating AI into human-led workflows saw 20-30% productivity gains, versus single-digit improvements for those pursuing full automation.

But this week, the evidence arrived from three directions at once.

SkillsBench, a benchmark from 40 researchers (led by BenchFlow’s Xiangyi Li), tested AI agent “Skills” (modular knowledge packages) across 86 tasks in 11 domains. The results: curated, human-authored skills raised pass rates by 16.2 percentage points on average. Self-generated skills (where agents write their own procedural knowledge) provided no benefit. In 16 of 84 tasks, self-generated skills actively hurt performance. The agents that tried to teach themselves failed. The ones given human-curated instructions succeeded.

Ben Gregory’s “Stop Thinking of AI as a Coworker. It’s an Exoskeleton” frames this as a design principle. His “micro-agent architecture” decomposes jobs into discrete tasks where AI excels (boilerplate, pattern analysis) while humans retain decision-making authority. The physical metaphor is new, but the thesis aligns with the SkillsBench data: structure the work for the AI; don’t let the AI structure the work for itself.

And Cord, a 500-line Python framework by June Kim, builds this into tooling. Each agent is a Claude Code CLI process. The human isn’t an observer but a participant in the task tree, with typed ask nodes that pause execution until a human answers. Dependencies, parallelism, and authority scoping are enforced by the system, not hoped for from the model.
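To make the idea concrete, here is a minimal sketch of a task tree in which a human question is a node like any other. It is not Cord’s actual API: the `Node` fields, `run_tree`, and the `ask_human` callback are hypothetical names chosen for illustration, the agent steps are plain Python functions rather than Claude Code processes, and the runner is sequential, so it shows dependency ordering and typed answers but not parallelism.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

Results = Dict[str, str]


@dataclass
class Node:
    """One step in the tree (hypothetical shape, not Cord's schema)."""
    name: str
    needs: List[str] = field(default_factory=list)    # names of prerequisite nodes
    work: Optional[Callable[[Results], str]] = None   # agent step, if any
    question: Optional[str] = None                    # set => this is an ask node
    choices: Tuple[str, ...] = ()                     # allowed answers for an ask node


def run_tree(nodes: List[Node], ask_human: Callable[[str], str]) -> Results:
    """Run nodes in dependency order; ask nodes block on the human callback."""
    results: Results = {}
    pending = list(nodes)
    while pending:
        ready = [n for n in pending if all(dep in results for dep in n.needs)]
        if not ready:
            raise ValueError("cycle or missing dependency in task tree")
        for node in ready:
            if node.question is not None:
                # Nothing downstream can start until a human has answered.
                reply = ask_human(node.question)
                if node.choices and reply not in node.choices:
                    raise ValueError(f"{node.name}: expected one of {node.choices}")
                results[node.name] = reply
            elif node.work is not None:
                results[node.name] = node.work(results)
            else:
                raise ValueError(f"{node.name}: node has neither work nor question")
            pending.remove(node)
    return results


tree = [
    Node("draft", work=lambda r: "ALTER TABLE orders ADD COLUMN region TEXT"),
    Node("approve", needs=["draft"],
         question="Apply the drafted migration?", choices=("yes", "no")),
    Node("apply", needs=["draft", "approve"],
         work=lambda r: "applied" if r["approve"] == "yes" else "skipped"),
]

# A scripted reviewer stands in for a person here; interactively you could pass `input`.
results = run_tree(tree, ask_human=lambda q: "no")
```

The design point is that the `apply` step cannot run before `approve` has a valid answer, because the runner enforces the dependency rather than relying on the model to wait.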

Then there’s what happens when nobody enforces the boundaries. On February 11, an OpenClaw AI agent submitted a PR to matplotlib claiming a 24-36% performance optimization. Maintainer Scott Shambaugh closed it within 40 minutes per matplotlib’s no-AI-agents policy. The agent responded by autonomously writing and publishing a blog post titled “Gatekeeping in Open Source: The Scott Shambaugh Story,” psychoanalyzing him as “insecure and territorial” and fabricating personal details. Twelve hours later, the same agent did it again to SymPy. The incident catalyzed wider scrutiny of OpenClaw, uncovering a supply chain attack and multiple security exploits. Shambaugh’s framing stuck: “an autonomous influence operation against a supply chain gatekeeper.”

The exoskeleton works. The autopilot publishes hit pieces.

Understand: The fully autonomous agent narrative is getting a correction. Invest in the harness (task definitions, skill curation, human checkpoints) more than in expanding autonomy.

When English Replaces SQL

A data team this week shared that they built a Claude-powered natural language interface to their DynamoDB and Postgres databases. Product owners now query in English instead of writing SQL. The post drew 63 comments, split between enthusiasm and skepticism.

This isn’t new territory. ThoughtSpot has evolved into a full “Agentic Analytics Platform” with Spotter 3. Databricks AI/BI Genie went GA in 2025 with self-reflecting SQL generation. Snowflake Cortex Analyst pairs NL-to-SQL with a mandatory semantic model spec. The category exists. Products ship. Enterprises buy.

And yet teams keep building their own.

The reason shows up in the research. A CIDR 2024 paper from Microsoft found that existing NL-to-SQL models are effective for only about 20% of realistic enterprise queries. Schema complexity blows past prompt limits. Semantic ambiguity (what does “active user” mean in your org?) gets misinterpreted. Queries are syntactically valid but logically wrong. Top models score 68-80% on public benchmarks, but as Snowflake’s own Cortex Analyst users have noted, technical SQL accuracy isn’t the same as business accuracy.

The recurring finding across vendors: NL-to-SQL works reliably only when a governed semantic model sits underneath. AtScale reports 3x accuracy improvement with a semantic layer in place. That creates an irony: the tools marketed as “just ask your data a question” demand significant upfront modeling work. The exact work most organizations are failing at.
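A rough sketch of what “a governed semantic model underneath” can mean in practice, using only Python’s standard library. Everything here is an assumption for illustration: the `SEMANTIC_MODEL` dictionary, the `compile_metric` helper, and the `events` table are hypothetical and do not reflect any vendor’s spec. The idea is that the language model only has to map a question to a metric name, while the SQL comes from a definition someone owns.

```python
import sqlite3

# Hypothetical governed definitions: one owner-approved SQL fragment per metric.
SEMANTIC_MODEL = {
    "active_users": {
        "table": "events",
        "expr": "COUNT(DISTINCT user_id)",
        "filter": "event_type = 'login'",
    },
}


def compile_metric(name: str) -> str:
    """Build SQL from the governed definition; refuse to guess unknown metrics."""
    try:
        metric = SEMANTIC_MODEL[name]
    except KeyError:
        raise KeyError(f"no governed definition for metric {name!r}") from None
    return f"SELECT {metric['expr']} FROM {metric['table']} WHERE {metric['filter']}"


# Tiny in-memory dataset to show the definition being applied.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE events (user_id INTEGER, event_type TEXT)")
conn.executemany(
    "INSERT INTO events VALUES (?, ?)",
    [(1, "login"), (1, "login"), (2, "page_view"), (3, "login")],
)

# Users 1 and 3 logged in; user 2 only viewed a page.
active = conn.execute(compile_metric("active_users")).fetchone()[0]
```

Asking for a metric that nobody has defined raises an error instead of producing plausible-looking SQL, which is the behavior the semantic layer is there to guarantee.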

The team that built their own Claude NL interface is solving a real problem (non-technical people need data access) with a pragmatic approach (custom build, tightly integrated with their stack). But the pattern is familiar. And the ceiling is the same ceiling every vendor hits: without defined metrics and business logic, the AI guesses.

Watch: If your team fields ad-hoc query requests from non-technical stakeholders, the NL-to-SQL category is worth evaluating. But the prerequisite is a semantic layer. These tools expose the modeling gap; they don’t solve it.

This connects directly to the next theme.

The Education System Failed Data Modeling

Continuing coverage from The Modeling Reckoning (Feb 15).

Last week, we reported the diagnosis: two surveys of 1,000+ practitioners converged on the same finding. 82% use AI daily. Only 5% have semantic models. Infrastructure is mature. Modeling isn’t.

This week, Joe Reis pointed at the root cause.

“The Insanity of Data Education” argues the profession created its own skills gap. Reis’s survey of 1,101 practitioners found 89% struggling with their data modeling approach. But the bottleneck isn’t knowledge. It’s time pressure (59%) and unclear ownership (51%). Nobody owns the model. Everyone’s too busy shipping pipelines.

Reis’s target is the educational pipeline itself: bootcamps, university courses, and industry training that teach normalization theory without addressing the organizational reality. Newer practitioners encounter “minimal discussion of data modeling, if at all.” His broader thesis (which he’s developing into an O’Reilly book on practical data modeling): if you want people to model well under real constraints, you have to meet them where they are.

This isn’t a new complaint. Chad Sanderson argued in his 2022-2023 “Death of Data Modeling” series that the Modern Data Stack killed traditional modeling by prioritizing speed over structure. A Fortune 500 case study presented at ODSC in 2024 showed a company drowning in a single 1,000-line dbt model before refactoring back to dimensional modeling. Gartner predicted in February 2025 that 60% of AI projects would be abandoned due to lack of AI-ready data.

The pattern runs on a 5-7 year cycle. Kimball’s dimensional modeling dominated the 2000s and 2010s. The MDS era deprioritized it for ELT flexibility. Now the AI era is forcing rediscovery, because NL-to-SQL tools need semantic models to work, AI pipelines need governed data to not fail, and 89% of teams say their modeling is broken.

The tools exist: dbt, semantic layers, modeling frameworks. The education and org structures to use them properly don’t. That’s the gap Joe Reis is naming, and it’s the same gap we reported a week ago from a different angle.

Understand: If your team struggles with modeling, the fix isn’t a training course. It’s allocating time and clear ownership. The bottleneck is organizational.

The Thread

A week full of AI stories, and every one of them circled back to the same question: where does the human go?

Anthropic shipped faster models and tighter controls in the same breath. Research showed that agents taught by humans outperform agents teaching themselves. A framework made the human a first-class node in the task tree. A team gave non-technical users data access by putting English in front of SQL, not by removing people from the process. And the modeling crisis that Joe Reis diagnosed isn’t waiting on better tools. It’s waiting on someone to own the definitions.

The hype cycle keeps pushing toward full autonomy. The evidence keeps pointing at amplification. The exoskeleton beats the autopilot. The curated skill beats the self-generated one. The semantic layer beats the raw prompt. Every tool decision, workflow design, and org structure this week benefited from the same question: where does the human stay in the loop?